Why Are We Still Manually "Polishing" AI-Generated Content in 2026?

If we go back to 2022, when we first encountered AI tools that could instantly generate thousand-word articles, we probably thought the era of industrialized content production had finally arrived. Four years later, reality is much more complex than anticipated. In the SaaS industry, especially within marketing and operations teams targeting global markets, a common phenomenon is that AI-generated article drafts almost invariably require a round of manual “fine-tuning.” Internally, we jokingly call this process “polishing,” but its essence goes far beyond adjusting a few words.

From “Prompt Engineering” to “Process Engineering”

Initially, teams invested significant effort in so-called “Prompt Engineering.” We continuously refined our instructions, trying to get AI to produce content that better matched the brand tone and had more depth. At one point, we thought we had found the “golden template.” However, problems quickly emerged: even with a carefully tuned prompt, the drafts still showed a series of subtle but critical issues when reviewed before publication.

These issues were rarely grammatical errors or obvious logical flaws; AI is already quite reliable in those areas. More often, they were “soft flaws” that are hard to prevent through instructions alone: reiterating known information instead of offering genuine insight into the latest developments in a niche industry; arguing points with overly generic examples that miss the real pain points our clients face in specific scenarios; and, where data or trend predictions are involved, drawing plausible-sounding but dangerous conclusions from outdated or incomplete information.

We gradually realized the problem wasn’t “how to ask,” but “how to use.” Generating content itself is just one step. If this step is viewed in isolation, all subsequent human interventions become repetitive, high-cost repair work. The real solution is embedding AI content generation into a more complete, smarter workflow, making “understanding needs, tracking trends, generating drafts, verifying facts, optimizing expression, adapting for publication” a coherent automated process. This is no longer “Prompt Engineering,” but “Process Engineering.”
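
To make the idea of a chained workflow concrete, here is a minimal Python sketch of stages run in sequence. Everything in it is an illustrative assumption: the `Draft` structure, the stage names, and the placeholder logic inside each stage are hypothetical stand-ins for real tools or model calls, not a description of any particular product.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Draft:
    """Carries one article through the workflow (hypothetical structure)."""
    topic: str
    body: str = ""
    flags: List[str] = field(default_factory=list)    # issues for human review
    history: List[str] = field(default_factory=list)  # stages already applied


Stage = Callable[[Draft], Draft]


def run_pipeline(draft: Draft, stages: List[Stage]) -> Draft:
    """Apply each stage in order and record which stages ran."""
    for stage in stages:
        draft = stage(draft)
        draft.history.append(stage.__name__)
    return draft


# Placeholder stages: each would wrap a real tool or model call.
def generate_draft(draft: Draft) -> Draft:
    draft.body = f"Draft article about {draft.topic}."
    return draft


def verify_facts(draft: Draft) -> Draft:
    # Stand-in check: flag any draft not yet marked as verified.
    if "verified" not in draft.body:
        draft.flags.append("facts not verified against internal docs")
    return draft


def adapt_for_publication(draft: Draft) -> Draft:
    draft.body = draft.body.strip()
    return draft


result = run_pipeline(
    Draft(topic="compliance automation"),
    [generate_draft, verify_facts, adapt_for_publication],
)
print(result.history)
print(result.flags)
```

The point of the design is that flags accumulate on the draft as it moves along, so a human editor sees one consolidated list of concerns at the end instead of repairing each draft from scratch.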

The Missing Insight: AI’s “Information Blind Spots”

AI tools are trained and generate based on vast historical data, giving them broad knowledge. But in the rapidly evolving SaaS field, true value often lies not in breadth, but in precision and foresight. AI has inherent “blind spots” in the following areas:

- Immediacy of Industry Micro-Trends: The latest competitive landscape, tech stack changes, and customer feedback trends in an emerging SaaS niche (like compliance automation tools for specific verticals in 2026) often first appear in specialized industry forums, startup tech blogs, or analysts’ latest briefings. This information is highly dispersed and unstructured, which makes it difficult for general AI models to capture in real time, let alone judge its significance.

- Specificity of Brand Narrative: Every SaaS company has its unique growth path, customer success stories, and technical philosophy. AI-generated content easily falls into generic industry jargon templates, lacking the ability to transform the company’s specific experiences into compelling stories. This “specificity” requires using the brand’s own knowledge base (past case studies, product iteration logs, customer interview records) as the core context for generation.

- Adaptation to Cross-Market Cultural Contexts: When generating content for different regional markets, it’s not just about language translation. Business practices, regulatory environments, and how local competitors are referenced all require deep localization knowledge. AI-generated drafts often need extensive manual correction here to avoid cultural misunderstandings or commercial faux pas.

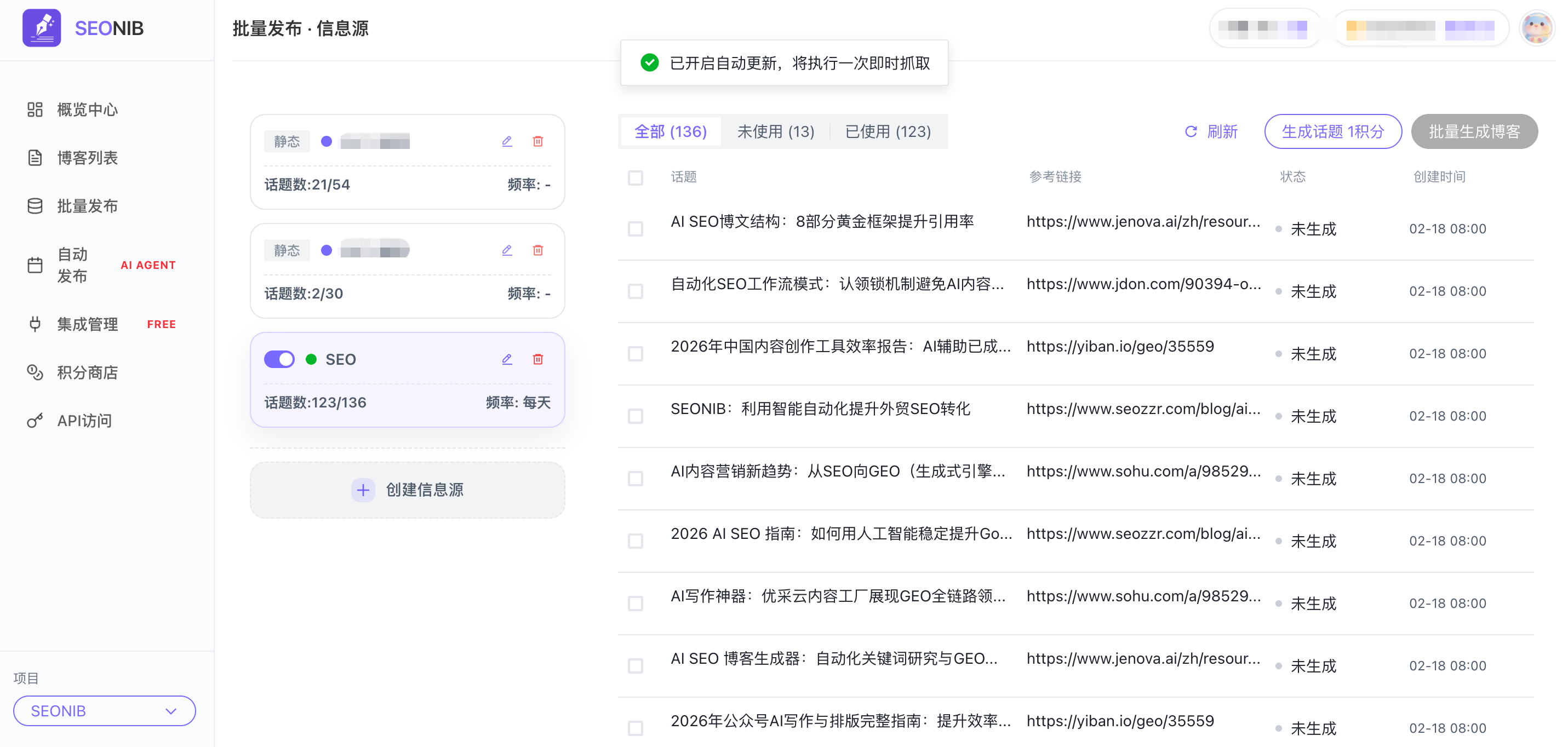

To address these issues, we began seeking solutions that could deeply integrate “real-time hotspot tracking” with “content generation.” This means the tool needs to actively scan and parse sources in our specified domains, understand emerging topics, and prioritize them as context for content generation. In practice, we use platforms like SEONIB precisely because their workflow design includes “tracking industry hotspots” as a preliminary step. It doesn’t just generate articles based on keywords; it attempts to understand what the industry is discussing at the current moment, then builds content around these real discussion focal points. This somewhat narrows the “information blind spots,” making the generated content’s starting point closer to the real pulse.
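
As a rough illustration of what “prioritizing emerging topics as context” could look like, the sketch below ranks topics by how often they were mentioned in a recent window and formats the result as context text. This is our own simplified assumption, not how SEONIB or any other platform works internally; the function names, the seven-day window, and the sample mentions are all made up for the example.

```python
from collections import Counter
from datetime import date
from typing import Iterable, List, Tuple

# Each mention is (topic, date seen). In practice these would come from
# scanning the forums, blogs, and briefings the team has chosen to follow.
Mention = Tuple[str, date]


def rank_topics(mentions: Iterable[Mention], today: date,
                window_days: int = 7, top_n: int = 3) -> List[str]:
    """Return the most-mentioned topics within the recent window."""
    recent = Counter(
        topic for topic, seen in mentions
        if 0 <= (today - seen).days <= window_days
    )
    # Sort by count (descending), then by name for a stable order.
    ranked = sorted(recent.items(), key=lambda item: (-item[1], item[0]))
    return [topic for topic, _ in ranked[:top_n]]


def build_context(topics: List[str]) -> str:
    """Format ranked topics as context text for a generation step."""
    lines = [f"- {topic}" for topic in topics]
    return "Current discussion topics:\n" + "\n".join(lines)


# Made-up sample data for illustration only.
mentions = [
    ("audit log retention", date(2026, 3, 9)),
    ("audit log retention", date(2026, 3, 10)),
    ("usage-based pricing", date(2026, 3, 10)),
    ("legacy SSO migration", date(2026, 1, 2)),  # outside the window
]
topics = rank_topics(mentions, today=date(2026, 3, 11))
print(build_context(topics))
```

Counting mentions is a crude proxy for significance. A real system would likely also weigh source credibility and novelty, which is one reason humans still adjust the tracked sources over time.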

Verification and Publishing: The Overlooked “Last Mile”

Even if content passes muster in insight and relevance, two critical steps are often underestimated before final publication: fact verification and publishing adaptation.

Fact verification is especially crucial in SaaS content. Content involving product performance data, integration solutions, API changes, pricing model comparisons, etc., has an extremely low tolerance for error. An article containing outdated or incorrect technical details directly damages the brand’s professional credibility. The ideal workflow should automatically cross-check against the company’s latest internal product documentation, knowledge base, or designated authoritative sources during or after generation, flagging or automatically correcting potentially conflicting information points.
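
A very simplified sketch of such a cross-check follows, assuming the authoritative values are available as a plain dictionary. The fact names, the values, and the `find_conflicts` helper are hypothetical, and matching on numbers in the same sentence is a deliberately naive heuristic rather than a complete verification method.

```python
import re
from typing import Dict, List

# Hypothetical authoritative values, e.g. exported from product documentation.
AUTHORITATIVE_FACTS: Dict[str, str] = {
    "api rate limit": "500",
    "starter plan price": "29",
}


def find_conflicts(text: str, facts: Dict[str, str]) -> List[str]:
    """Flag sentences that mention a known fact with a different number."""
    conflicts = []
    # Split into sentences at whitespace that follows ., ! or ?
    for sentence in re.split(r"(?<=[.!?])\s+", text):
        lowered = sentence.lower()
        for name, expected in facts.items():
            if name not in lowered:
                continue
            numbers = re.findall(r"\d+(?:\.\d+)?", sentence)
            if numbers and expected not in numbers:
                conflicts.append(
                    f"'{name}': expected {expected}, found {numbers} in: {sentence}"
                )
    return conflicts


draft = (
    "The API rate limit is 1000 requests per minute. "
    "The Starter plan price is 29 dollars per month."
)
for conflict in find_conflicts(draft, AUTHORITATIVE_FACTS):
    print("REVIEW:", conflict)
```

Here only the rate-limit sentence is flagged, because its number disagrees with the reference value. Flagging for review instead of rewriting automatically keeps the human editor in control of any claim with a low tolerance for error.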

Publishing adaptation is a matter of efficiency. The generated content ultimately needs to be placed into a CMS (Content Management System), which may require adapting it to specific template formats, adding appropriate meta tags, configuring multilingual versions, or distributing it to different publishing channels (website blog, third-party tech communities, email newsletters, etc.). If this step still requires manual copying, pasting, and formatting adjustments, much of the efficiency gained from automation is lost just before the finish line.
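
The sketch below shows one way the adaptation step might be automated: turning a finished article into one structured payload per locale and channel. The payload fields, the 155-character description cutoff, and the `build_payloads` helper are assumptions for illustration. A real CMS defines its own schema, and a localization step would supply different text for each locale.

```python
import json
import re
from typing import Dict, List


def slugify(title: str) -> str:
    """Turn a title into a lowercase, hyphen-separated URL slug."""
    return re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")


def build_payloads(title: str, body: str, locales: List[str],
                   channels: List[str]) -> List[Dict[str, object]]:
    """Build one publish-ready payload per (locale, channel) pair."""
    description = body[:155].rstrip()  # short meta description (assumed limit)
    return [
        {
            "slug": slugify(title),
            "locale": locale,
            "channel": channel,
            "title": title,
            "meta": {"description": description},
            "body": body,
        }
        for locale in locales
        for channel in channels
    ]


payloads = build_payloads(
    title="Why Fact Checks Matter!",
    body="Outdated technical details damage credibility.",
    locales=["en", "de"],
    channels=["blog", "newsletter"],
)
print(json.dumps(payloads[0], indent=2))
```

Two locales and two channels yield four payloads, each of which could be handed to a CMS or newsletter tool without manual copying and pasting.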

Therefore, a complete AI content operations workflow must include a closed loop from generation to safe, compliant publication. Higher automation shifts the role of human editors from “tinkerers” to “strategic decision-makers” and “final quality gatekeepers,” allowing them to focus on imbuing content with true strategic intent and creative spark.

The Evolution of Human Roles: From Creator to Curator

One profound change brought by this process is the transformation of the content team’s role. Editors or marketing operations personnel are no longer the “sole creators” of an article. They are more like “curators” or “conductors.”

Their core work becomes:

- Defining Strategy and Boundaries: Determining the thematic direction, target audience, and core messaging for content series, and setting clear knowledge boundaries and credible sources for AI.

- Injecting Real Insight and Emotion: Adding real stories, unique perspectives, and emotional warmth from frontline customer service, sales feedback, or product development onto the framework of AI-generated content. This is currently difficult for AI to replace.

- Executing Final Quality Arbitration: Drawing on their professional experience to make the final call on the content’s commercial accuracy and brand consistency, and fine-tuning where needed.

- Managing and Optimizing the Process Itself: Continuously monitoring the output quality of the AI workflow and adjusting the sources for hotspot tracking, the rules for verification checks, and the templates for publishing adaptation, so that the entire system becomes smarter and more reliable over time.

This transformation liberates human resources, allowing us to focus more on high-value strategic thinking and creative work, rather than being inundated with repetitive basic writing tasks. It doesn’t aim to completely replace humans with AI, but to build a new paradigm of “human-machine collaboration,” where both complement each other’s strengths.

FAQ

Q1: Will AI-generated content lead to uniform website content style, losing brand personality? A: If AI generation tools are used in isolation and lack input from brand-specific knowledge bases and human strategic guidance, this risk indeed exists. The key is treating AI as an execution engine, not a strategic brain. Teams need to provide AI with clear brand narrative frameworks, exclusive success case libraries, and core value proposition descriptions, using these as “mandatory context” for content generation. Simultaneously, the final human editing stage must be responsible for injecting unique perspectives and emotion.

Q2: How can we ensure the accuracy of data and facts in AI-generated content to avoid legal or reputational risks? A: This requires establishing a “verification” step in the workflow. Best practice is configuring tools to automatically access or compare against designated authoritative sources, such as the company’s latest product technical documentation, official announcements, recognized industry standard documents, etc. For critical data or claims, the system should flag discrepancies with sources or directly block publication, requiring human review. We cannot rely on AI’s “common sense”; we must establish a verification mechanism based on reliable sources.

Q3: For multilingual markets, how can AI content generation effectively handle deep localization, rather than simple translation? A: Simple language translation cannot solve localization problems. Two levels of support are needed: first, the tool itself should possess knowledge bases for the cultural and commercial contexts of specific regional markets, considering local conventions, regulations, and competitive environments during generation; second, there must be involvement from local market teams or experts who can provide localized keywords, case references, and review and adjust generated drafts from a cultural context perspective. Localization is a collaborative “generation + review” process.

Q4: Will fully automated content production cause us to miss deep topics that only humans can identify and that aren’t hot trends? A: Yes. Automated hotspot tracking and generation primarily serve timely and scalable content needs. Deep analyses, forward-looking predictions, and disruptive viewpoints that grow out of long-term industry observation and the collision of cross-domain ideas still require human leadership. Automated systems should be viewed as efficiency tools that cover basic content needs and keep information fresh, thereby freeing up time and energy for human teams to focus on this more strategically valuable deep content. The two should be complementary.